PSRO: Policy-Space Response Oracles

Definition

Lanctot, Marc, et al. “A unified game-theoretic approach to multiagent reinforcement learning.” Advances in neural information processing systems 30 (2017).

- multi agents: me vs opponents

- joint action -> rewards + state transition; non-stationarity

- me vs which opponents?

- PSRO will answer this question.

- It provides a general framework, which generalizes independent learning, self-play, and fictitious-play

- self-play: $\sigma_{-i}=(0,0,\ldots,1,0)$, best response to the previous strategy of its own

- independent learning: $\sigma_{-i}=(0,0,\ldots,0,1)$

- fictitious-play: $\sigma_{-i}=(1/K,1/K,\ldots,1/K,0)$ where $K = \vert \Pi_{-i}^{T-1} \vert$

- Meta-strategy $\sigma$ specifies how to sample opponents’ strategies.

- Oracle

- It means the best response to the current population, which is a black box (my understanding)

- $\Pi_i$ is the set of best responses.

- Compute a meta-strategy $\sigma$. This step requires an approximation tool for an equilibrium solver.

- This algorithm is a equilibrium solver.

Alternative Description

Bighashdel, Ariyan, et al. “Policy space response oracles: A survey.” arXiv preprint arXiv:2403.02227 (2024).

The following part is taken from: Li, Wenhao, et al. “Verbalized Bayesian Persuasion.” arXiv preprint arXiv:2502.01587 (2025).

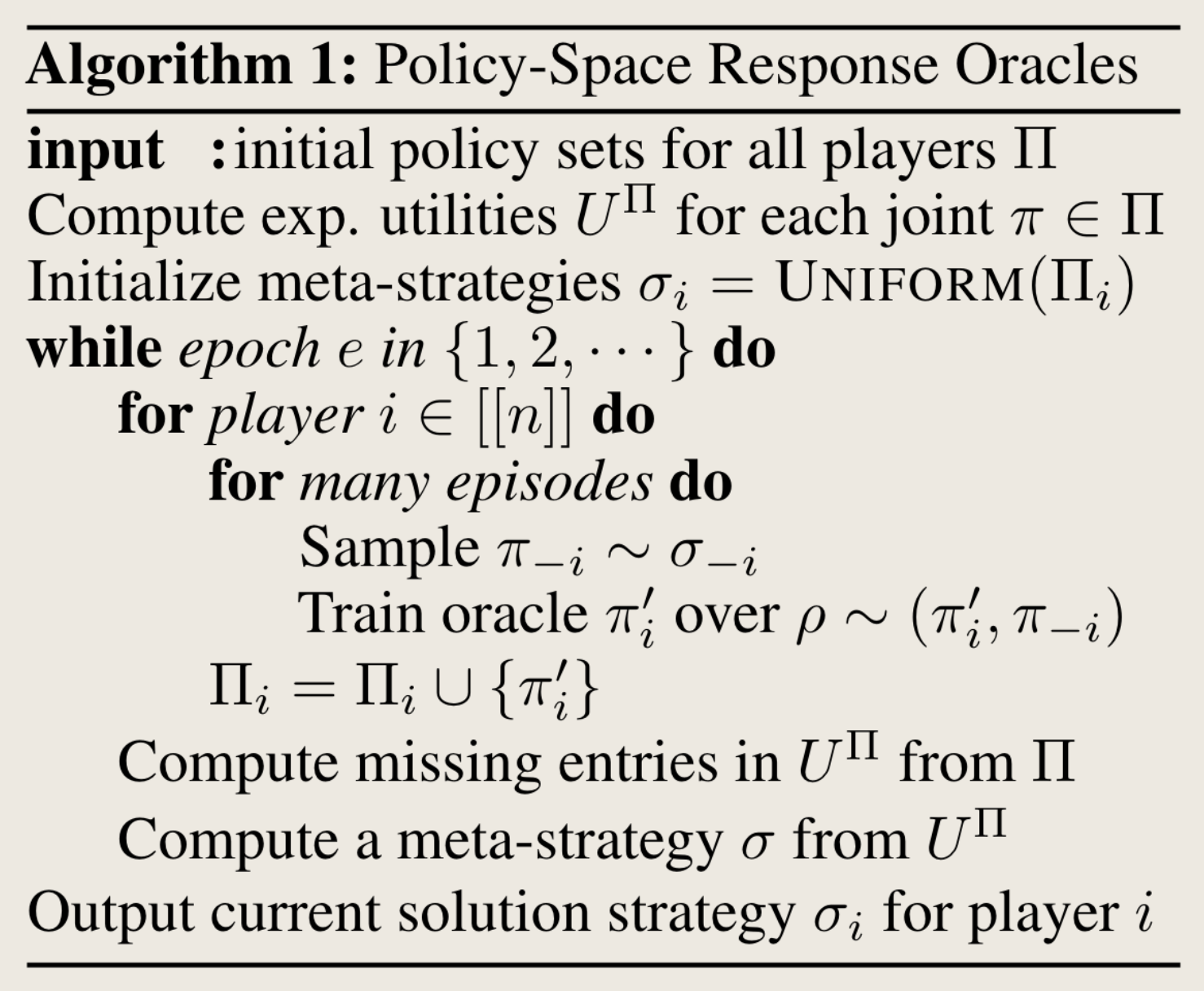

PSRO algorithms begin with an initial set of strategies for each agent and proceed through two alternating steps.

- First, a normal-form meta-game (e.g., matrix game) is constructed, where each agent selects a meta-strategy to represent their overall behavior in the game.

- A meta-solver (e.g., Nash equilibrium solver) then computes a solution (e.g., Nash equilibrium) for this meta-game.

- In the second step, each agent computes an approximate best response to the meta-strategy, aiming to improve their reward assuming the other agents play according to the meta-strategy.

- This process repeats until no agent can benefit by deviating from their strategies

My understanding:

- If the meta-solver is accurate, then the two steps will only execute once.

- Normally we use projected replicator dynamics as a meta-solver.

This post is licensed under CC BY 4.0 by the author.